That was a helluva Q1 with all the EdTech and world developments. I feel like we all need a tea break :)

In the previous edition, we discussed how not every quarter is a rocket launch, and I would say that, to some extent, this quarter continues major developments already discussed in the series. Frankly, I do not like redundancies, and stretching digital credentials into another quarter would be exactly that, so we are going to explore new plots that may seem more niche at the moment.

Maybe because it is spring, I feel like EdTech and learning are finally upgrading from a confusing situationship (that survived St. Val’s!!) to an official relationship. No more “you asleep?” at 3 am after ghosting for a week, and no more sloppy bar kisses only to play coworkers the next morning. We are now interoperable, more mindful about how we deal with AI infatuation, assess closer to real life with an open-book approach, and value actions over promises when it comes to accessibility.

Of course, there is no perfect relationship, so Q2 brings antitrends that do not deserve a second date. À la poubelle, medieval on-campus testing narrative!

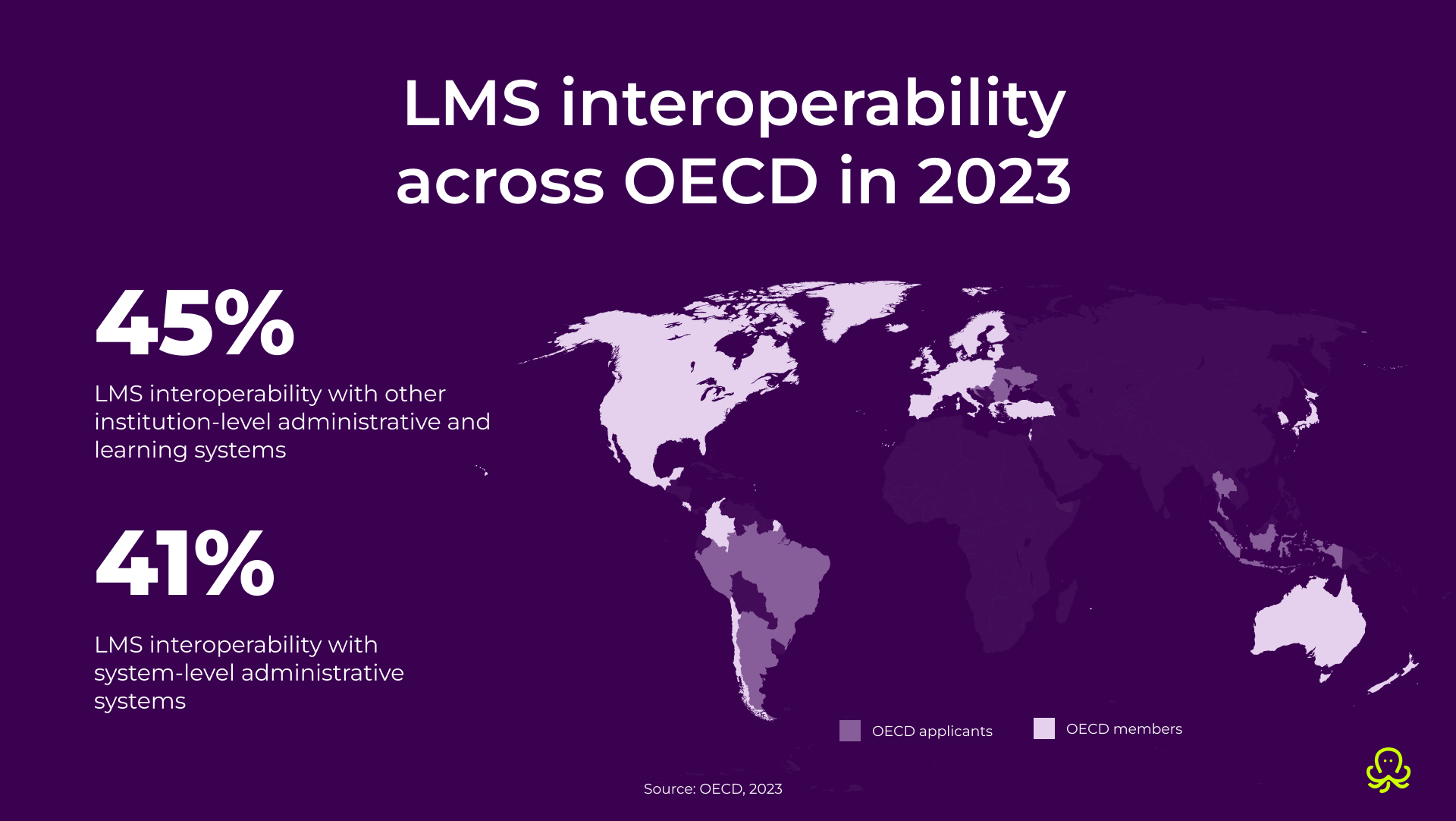

For years, education technology shopping had a very specific dating-app vibe: a shiny demo, a few impressive features, some AI sparkle on top, and everyone ignoring the fact that the tool does not really support edtech ecosystem integration. Q2 2026 calls that a red flag.

Buyers are getting tired of products that look brilliant on their own but become high-maintenance the moment they meet student records, accessibility workflows, reporting requirements, and identity systems already in place. Oh, and don’t forget that they always need that one heroic, overworked, and underpaid admin to make this wonder waffle work.

In other words, interoperability is finally outweighing chemistry.

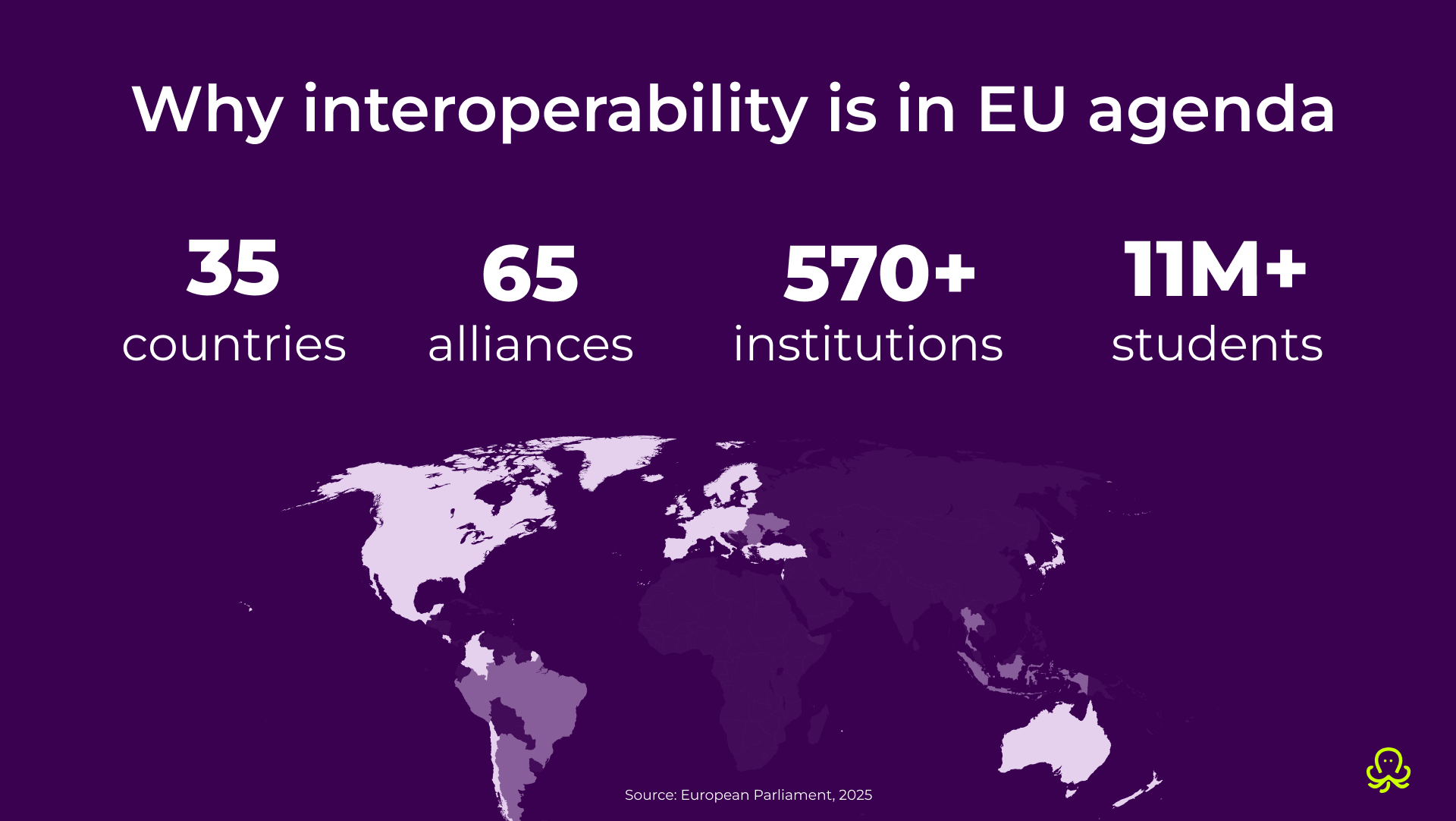

The Higher Education Interoperability Framework, developed by the European Digital Education Hub, is designed to make digital teaching and learning easier across Europe. Its reference architecture supports every step of the learner journey. Students can discover new opportunities, apply to multiple institutions, access digital tools, track their learning progress, earn credentials, and manage their online identities. The aim is to make cross-institution learning actually work as the EU moves toward virtual inter-university campuses.

A disconnected stack creates duplicate work, broken learner journeys, poor data quality, inaccessible handoffs, and compliance headaches. UNESCO, UNICEF, and the ITU now explicitly say public digital learning platforms should be built for integration, using open technical standards and secure data exchange so they can connect to registries, credentialing bodies, and other core education systems. The EU continues working on the Digital Identity Wallet, and interoperability is exactly the principle that enables it across member nations.

The best approach is to act early, at the source, because fragmentation is already too expensive to tolerate and catching up will only get costlier. Audit your ecosystem for hidden friction. Ask vendors about standards support, data portability, secure exchange, accessibility, and governance before you fall in love with features.

In practice, this trend is about education data interoperability, student information system integration, and the wider education systems modernization work that institutions can no longer postpone.

A product that only performs well in isolation is already a procurement risk, not an innovation.

OctoProctor features seamless integration with over 30 LMSs as well as SCORM-based exams. We are proudly plug-and-play, device-agnostic, and browser-based. No delays or friction on our side. We fit into EU mobility and interoperability frameworks without any policy exceptions or troubles :)

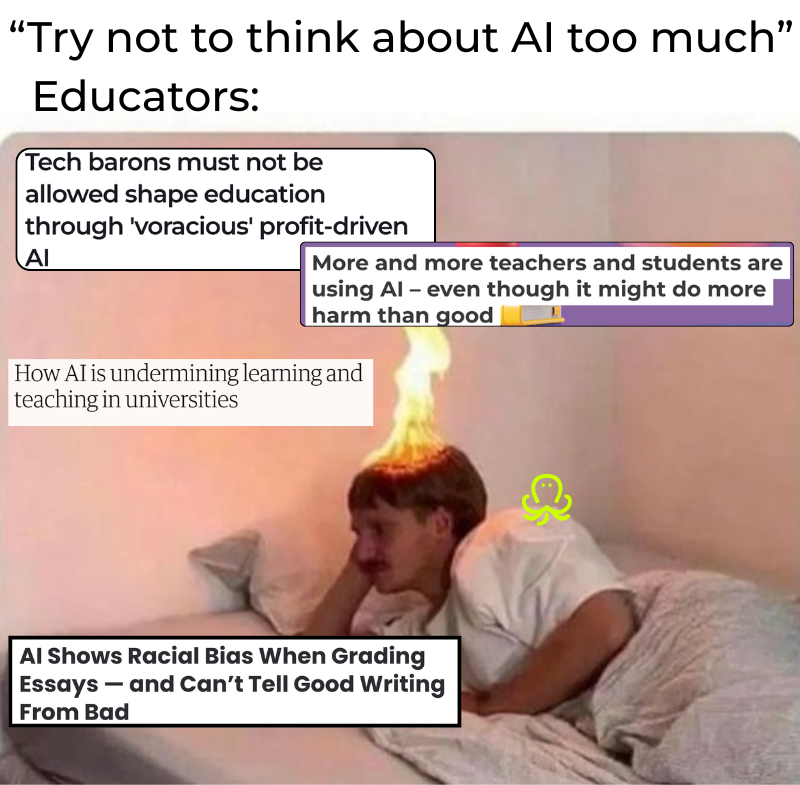

Responsible AI in education starts with the people expected to use it well. Surprise! Educators also fall victim to “fast AI,” and that is why AI training for educators is becoming a real buying and governance issue. The negative effects of the lack of brakes have been extensively covered by the media and academia.

AI literacy is not a new concept either; what’s new is that it finally gets an official status in Europe.

In March 2026, the European Commission released updated guidelines for teachers on the ethical use of AI and data, digital literacy, disinformation, and informatics education. UNESCO, although much earlier, also introduced AI competency frameworks for both teachers and students, while the OECD’s 2026 Digital Education Outlook advocates for more human-centered, purpose-built applications of GenAI in education.

Institutions will allocate more funds to educator enablement rather than just student-facing tools. Products that demand extensive staff effort or rely on vague policy improvisation will seem less effective compared to tools with transparent teacher controls, auditability, and explainable use cases. Product discovery will involve closer engagement with vendor materials, which will build stronger funnels but will arguably make deals take longer to close.

LinkedIn ruminations on ethical AI use in classrooms are great for virtue signaling. Another legal push for AI policy in higher education and a broader post-AI education strategy is a win, given the significant artificial intelligence lobbying before the 2026 US midterms. I bet that you have met AI bros in the wild – overly enthusiastic proponents of generative AI who aggressively promote the technology, frequently dismissing ethical concerns, copyright issues, and the value of human labor. They are one of the reasons why general education needs to set limits until methods and models are proven safe and effective.

Treat educator AI readiness as a procurement category. Pro-AI literacy onboarding materials call for a tighter vendor-client relationship, classroom examples, acceptable-use guardrails, and role-based permissions. Institutions should also test their teaching staff’s AI literacy to detect any gaps and threats before developing an AI upskilling program and standards.

AI may support teaching and prep. AI learning assistants are a great example of what works, but they will do so even better with AI literacy in place. OctoProctor has emphasized that assessments need transparent rules, human-authored exams, and configurable monitoring. Our media and customer success initiatives share the latest frameworks, research, and use cases for the OctoProctor AI solution beyond invigilation mechanics to ensure high assessment standards.

I can already hear the eyebrow raise: in what universe are open-book exams an EdTech trend, let alone the future? Well, who said it is only a physical book?

This trend stops pretending knowledge lives in a vacuum. Open book can mean notes, standards, case materials, formulas, pre-seen business scenarios, or a tightly defined resource pack. It can also be a “slow AI” approach, and that alone can be a trap more challenging than navigating a few select PDFs.

And, of course, open-book exams can be conducted online, which is exactly why they belong in the conversation around online exam trends 2026 and digital assessment trends.

Online open-book and open-book, open-web (OBOW) exams are formats we increasingly hear about from both established and potential clients. The discourse around open-book assessment has matured: it is no longer treated as a gimmicky alternative to proctored high-stakes exams.

Trendsetting universities like Cornell, Indiana, and Trent openly frame it as a better fit for questions that test application, analysis, and synthesis rather than memorization. Oxford has maintained open-book online exams in its assessment mix and formalized rules around them, while the Chartered Institute of Management Accountants (CIMA) case study exams continue to depend on pre-seen materials that students must work with rather than memorize. Cambridge Assessment has also approached open-book, open-web assessment as a real-time design challenge for digital high-stakes testing.

One reason open-book formats are resurfacing in exam security trends is that they shift the focus from memorization policing to exam design in the generative AI age. Closed-book exam formats are easy to game even without cheating in the classical sense. In other words, proctoring technology can improve and be made stricter, but it still doesn't guarantee long-lasting learning results, especially in an unfocused curriculum where choosing between cheating and genuine engagement becomes a matter of prioritization.

Properly designed OBOW is attractive to advanced, high-ranking programs because it mimics real life. Multiple-choice tests do not test judgment, prioritization, interpretation, or transfer. Obviously, open book must not become “open ghostwriter.”

Start by deciding which materials are genuinely open and which aren’t. Create questions that go beyond simple copy-paste behavior: consider case variation, localized details, timed decisions, reflective justification, data interpretation, and personalized prompts. Lastly, safeguard the session. If the exam is important, you still need to confirm the registered learner took it, used only allowed materials, and didn’t delegate thinking to unauthorized tabs, tools, or helpers.

Open-book exams are not anti-proctoring. If anything, they make good proctoring more valuable.

Proctoring tracks the whole session, giving you both insights into each learner’s thinking process and how well your exam is actually designed. Proctoring systems such as OctoProctor also help block or flag access to prohibited sources while leaving approved materials intact. In other words, remote invigilation lets institutions enjoy the better assessment quality of open-book design without turning the exam into an honor-system free-for-all.
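To make the "block or flag prohibited sources, leave approved materials intact" idea concrete, here is a minimal sketch of an allowlist check. It is not OctoProctor's implementation or API; the host names, the `ALLOWED_HOSTS` set, and the `classify_request` function are all hypothetical and only illustrate the rule that anything outside the defined resource pack gets flagged.

```python
from urllib.parse import urlparse

# Hypothetical resource pack for one exam; these hosts are placeholders.
ALLOWED_HOSTS = {"lms.example.edu", "standards.example.org"}


def classify_request(url: str, allowed_hosts: set[str] = ALLOWED_HOSTS) -> str:
    """Return "allow" for approved materials and "flag" for everything else."""
    host = (urlparse(url).hostname or "").lower()
    for allowed in allowed_hosts:
        # Accept the approved host itself or any of its subdomains.
        if host == allowed or host.endswith("." + allowed):
            return "allow"
    return "flag"


if __name__ == "__main__":
    for url in (
        "https://lms.example.edu/case-pack.pdf",
        "https://chat.example.com/",
    ):
        print(url, "->", classify_request(url))
```

Matching on the parsed host rather than on a substring of the URL keeps look-alike addresses (an approved name buried inside a different domain) from slipping through. Whether a flagged request is blocked outright or only logged for review is a policy choice for the exam owner.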

Finally, accessibility has stopped being an abstract “best practice” and has begun to influence procurement and delivery deadlines. For years, plenty of institutions treated it as “we care, we are improving, please do not cancel us, admire our statement.” In 2026 Q2, accessibility is becoming a market filter, providing a legal and operational rationale for asking uncomfortable questions that many EdTechs will not have answers for.

In the US, the Department of Justice’s updated ADA Title II rule sets population-size-dependent deadlines of April 2026 or April 2027 for accessible web content and mobile apps for state and local governments, including many public colleges and universities. EDUCAUSE has responded by advocating for embedded digital accessibility and by directing institutions and vendors to tools like the Higher Education Community Vendor Assessment Toolkit (HECVAT), which now incorporates accessibility alongside privacy, security, and compliance.

The European Accessibility Act took effect on June 28, 2025, establishing uniform accessibility standards for a variety of products and services within the internal market. Accessible EU’s guidance and the Commission’s materials clarify that accessibility is now a key aspect of how digital products are expected to function across borders, including services related to information and communication technologies (ICT). Additionally, since public-sector digital services in the EU are also influenced by the Web Accessibility Directive, buyers are increasingly seeking platforms that can withstand both legal scrutiny and real-world use. The Commission is also planning a wider public procurement revision in 2026.

First of all, people who require accessibility are not the small minority some like to believe. Being born able-bodied does not guarantee that one will never need accessibility support or acquire a disability later in life.

The accessibility mandate touches LMS handoffs, exam portals, authentication screens, mobile camera flows, results dashboards, help widgets, file uploads, captions, alt text, keyboard-only navigation, color contrast, error messaging, and every other ugly little point on the accessibility minefield.

Buyers will increasingly ask vendors about WCAG alignment, app accessibility, captions, keyboard navigation, screen-reader compatibility, and exception handling. Bad UX is a compliance risk that directly undermines exam credibility, no matter how great the learning materials and approach to test design were.

Accessibility in online learning increasingly overlaps with universal design for learning (UDL): clear instructions, flexible access, usable interfaces, and fewer avoidable barriers from the start. So, it is highly likely that your instructional design has a strong foundation for accessible e-learning.

Procurement must treat accessibility the way it already treats security questionnaires. Ask vendors whether there is a current accessibility conformance report. Which WCAG level is the vendor testing against? Are mobile workflows included, or just desktop? How does the platform behave with screen readers, keyboard-only use, captions, zoom, color-blind-safe status cues, and cognitive accessibility basics such as clear error messaging? If remediation is needed, who owns it?
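Some vendor answers can be spot-checked rather than taken on trust. As one example, WCAG 2.x defines a contrast ratio between text and background colors, with 4.5:1 as the Level AA minimum for normal-size text and 3:1 for large text. The sketch below follows that published formula; the function names are my own, and a passing ratio is only one small signal, not a substitute for a conformance report or testing with real assistive technology.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, per the WCAG 2.x definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio, from 1.0 (identical colors) up to 21.0 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """True if the pair meets the Level AA contrast minimum."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)


if __name__ == "__main__":
    # Mid-gray status text on a white exam screen.
    print(round(contrast_ratio("#777777", "#FFFFFF"), 2), passes_aa("#777777", "#FFFFFF"))
```

A check like this fits naturally into a pilot: pull the colors used for status cues and error messages on the exam screens and see whether they clear the threshold before the contract is signed.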

Speaking of which, remediation plans are becoming table stakes both for new and existing partnerships. LMSs and exam vendors will need to systematically step up their accessibility game.

This trend directly aligns with our ongoing efforts. In v5.9.0 and v5.8.0, OctoProctor introduced enhanced test-taker and admin accessibility features, such as programmatically associated labels in login fields, clearer visual cues with descriptive alt text and text labels, and streamlined screen-reader navigation. We are also actively exploring humane exam proctoring frameworks that support neurodiverse classrooms. For us, accessibility is both a technical and ideological commitment, and it is no longer something institutions can afford to admire from afar.

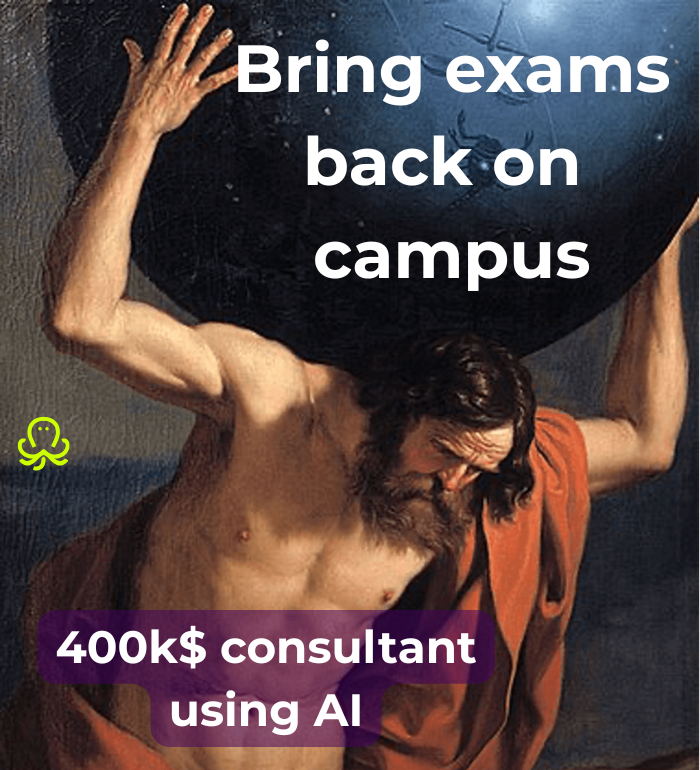

Western countries like Australia are seeing a push to depart from remote delivery. A step backward for corporate and tertiary education, bringing everyone back on campus promises to curb AI cheating.

At the end of Q1, the world suddenly got up on the wrong side of the AI bed. Now, academe is suspicious and needs its students back at unis, yesterday. The same goes for the workplace. It is ironic that pricey consultants are actively pushing the back-on-campus narrative, when Deloitte and KPMG are passing off unverified AI hallucinations as costly consulting. Proctored exams are largely absent from this discourse as a more effective, personalized alternative to de-onlining learning.

The radicalization of the discourse around exams is already prompting a witch hunt. Test takers are viewed as guilty without trial, causing immense stress. Going back to paper and pen is a costly, inflexible move that will endanger hybrid and remote cohorts.

Learn how to read cheating statistics. Focus on contextualizing flags instead of taking everything at face value. Explore a continuous assessment approach. Adaptive testing with personalized questions is a strong combo that will prevent major leaks and hard-to-detect cheating. Discuss cheating with test takers openly: what counts as cheating, why, and what the consequences are. Research proctoring solutions that are configurable to your unique needs. Pilot different settings to find what is optimal for your exam design. Finally, run post-exam test taker surveys to see how they perceived the experience.
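One way to read flags in context rather than at face value is to normalize them by session length and compare each test taker with the rest of the cohort. The sketch below is only an illustration under my own assumptions: the data shape, the function names, and the `factor` of 3 are hypothetical, not a recommended threshold or any vendor's method. Its output is a list of sessions worth a human look, not a verdict.

```python
from statistics import median


def flag_rates(sessions: dict[str, tuple[int, float]]) -> dict[str, float]:
    """Map each test taker to flags per hour.

    sessions: {test_taker_id: (flag_count, duration_minutes)}
    """
    return {
        taker: flags / (minutes / 60)
        for taker, (flags, minutes) in sessions.items()
    }


def needs_review(sessions: dict[str, tuple[int, float]], factor: float = 3.0) -> list[str]:
    """Return test takers whose flag rate is well above the cohort median.

    `factor` is an arbitrary illustrative multiplier; tune it per exam design.
    """
    rates = flag_rates(sessions)
    baseline = median(rates.values())
    return sorted(
        taker for taker, rate in rates.items()
        if rate > 0 and rate > factor * baseline
    )


if __name__ == "__main__":
    # Made-up cohort: (flag count, session length in minutes).
    cohort = {"a": (2, 60), "b": (3, 90), "c": (12, 60), "d": (1, 30)}
    print(needs_review(cohort))
```

In this made-up cohort, "b" has more raw flags than "a" or "d" but the same rate per hour, so only "c" stands out. The same comparison can be rerun after changing proctoring settings in a pilot to see whether a spike comes from behavior or from configuration.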

There is no right or wrong side in AI discourse. It exists today and will continue to do so unless we encounter a Terminator or Transcendence scenario (not sure what’s worse – losing the digital or flopping a film with such a monumental cast).

Have students never-ever cheated? Nope. And the more we measure, the higher the reported cheating flags will be. The principle is exactly the same as with healthcare. It’s not that the world suddenly has an “epidemic” of neurodivergence; humanity simply hasn't measured or diagnosed it properly for decades.

You can't rely solely on a 1:1 test taker-to-proctor ratio to prevent cheating, however the internet warriors paint this shift. Not in this economy. And what are the actual gains? AI or auto proctoring, by contrast, actually gives you that 1:1, auditable, and appealable experience. Cost-effective, scalable. Can be done asynchronously, no need to drag everyone into a test center.

Q2 2026 has been taken on with pragmatism, and I couldn’t be happier. Being infatuated with something is great, but mass education needs platonic love. Passion burns. AI and too-good-to-be-true start-ups have left enough painful marks on all of us to realize that it is continuity that works.

Some tech simply needs time to bloom. For inventors to be wiser and more experienced, for technical capabilities to catch up, and for the world to be ready to embrace it. Our founder, Anton, also sets ideas aside, and some take a couple of years to materialize. Being avant-garde is never bad. But rushing it to the public sector is.

Ground yourself and observe, feel the currents. Slow down. Spring has arrived. The world is built on interoperability, and you are yet another, wonderfully perfect part of it. Reconnect with the cycle, and you will find the answers to each trend, whose season is timed just right 🌸

Whether it is a trend like proctored remote open-book testing or a tried-and-tested approach, OctoProctor will fit with them equally naturally. Configurable to match your unique vision and cohort, our invigilation solution is ready when you are.

The 2026 Q2 shifts are a result of earlier EdTech hype. The chaos is calming down, and we are beginning to see symbiotic learning systems designed for today that balance the experimental driving forces. Education now focuses on interoperability over point solutions, educator AI literacy as a procurement issue rather than chasing the most futuristic-sounding solution, open-book online exams leveling the playing field against AI cheating, and accessibility deadlines reshaping checkbox EdTech buying.

Because institutions are tired of buying tools that only look good in an isolated demo. In 2026, humanity wants systems that create strong reporting and are stackable without breaking at scale. Broken learner journeys, overspending on dozens of standalone tools, managing them – it all creates avoidable friction and breaks trust. Agile modernity simply cannot afford not to be interoperable anymore.

Yes, especially in advanced programs, certification, and other contexts where shallow memorization was never enough. Open-book exams are attractive because they can test judgment, interpretation, and application more realistically than many closed-book formats. The real open-book exam benefits appear when institutions combine better task design with assessment integrity solutions such as OctoProctor that verify identity, protect allowed-resource rules, and preserve due process.

Open-book does not mean open everything. Remote proctoring software can help institutions verify identity, monitor the full exam session, and block or flag access to unauthorized websites, tools, or outside help while still allowing approved materials. Proctoring can also show where test takers struggled with the exam and how exactly they used the open-book format to overcome those challenges. That gives exam owners a way to improve assessment and education quality that isn't available in uninvigilated OBOWs or traditional ABCs. MBAs, engineering, medical, and nursing schools can significantly benefit from such insights in their training.

Dusty vendor website statements or pledges are no longer enough for procurement to cross accessibility off its checklist. Public institutions in the US and Europe are facing increasingly stringent legal requirements for accessible web content, apps, digital services, and exam workflows. That means procurement teams are more likely to ask for WCAG alignment, keyboard navigation, screen-reader compatibility, mobile accessibility, captions, and remediation plans before they sign or renew a contract.

An answer to the classical “does it catch AI?” is yes, proctoring can block AI usage. 2026, however, necessitates deeper questions. Does the online proctoring platform integrate cleanly with the LMS? Can it support open-book and closed-book exam formats? Is it accessible for neurodiverse learners, admins, proctors, and test takers using assistive tech? Does it offer configurable rules, clear audit trails, and different supervision models such as AI, automated, or live proctoring? Are vendors’ communications transparent, and do they offer support and insights beyond a one-size-fits-all onboarding? Good proctoring software supports assessment quality beyond quantitative metrics.

Beyond surveillance, proctoring means moving away from raw flag counts as the whole story. It focuses on context, clear rules, proportionate supervision, appealable evidence, and trust-based assessment models that protect integrity without turning every test taker into a suspect.

The best student-centered learning technology is usable by default, not only after complaints or remediation. That is why accessibility in online learning increasingly overlaps with universal design for learning, inclusive procurement, and the future of higher education learning more broadly.

If educators do not understand how an AI tool works and what boundaries to set in a world where roughly every second EdTech startup includes something AI for the hype, institutions end up buying products they cannot govern properly. Educator AI literacy is becoming part of vendor evaluation: buyers want onboarding, role-based controls, explainable use cases, and guardrails. AI literacy prompts the vendor-client relationship to become less transactional.